A little while back I had a conversation with a student in the Library and Information Science Program at the University of Wisconsin. She’s in the middle of an information architecture course and had just finished the section on creating sitemaps. Our conversation quickly turned to testing navigation for websites. Here at Aten, we work collaboratively with stakeholders to create simple yet compelling navigation systems for their new sites. Once we have a pretty good idea of what the nested sitemap should be, we use a couple of methods to conduct usability tests on the proposed structure: a self-assessed, two-part test to define a baseline level of success, and an online survey for real users to complete.

As Justin points out, the simple, two-part test consists of two general questions about the architecture of your site:

This is a great test that you can do on your own or with a group of stakeholders on your team.

The second test, which we’ll cover in detail below, uses an online tool to test your navigation. There are a number of tools you can use to test a site structure before getting into the buildout. Treejack, C-Inspector, Naview and UserZoom are a few that we’ve looked at. Each tool is a little different, but they all work in much the same way.

How to Set Up a Test

To create a test, all you need to do is:

1. Input your sitemap

Most likely you’ll need to input your sitemap into the tool in outline form:

Some tools let you upload your sitemap using a .csv or Excel file. This is a handy feature if you’ve already created your sitemap in that format.
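If your sitemap lives in a plain text outline, a small script can turn it into full paths before you paste or upload it. The sketch below is only an illustration: the two-space indentation, the `parse_outline` helper and the sample menu items (including "Men's Shoes" and "Boots") are our assumptions, not a format required by any of the tools above.

```python
def parse_outline(text, indent="  "):
    """Turn an indented outline into a list of full paths, one per menu item."""
    paths = []
    stack = []  # labels of the ancestors of the current line
    for raw in text.splitlines():
        if not raw.strip():
            continue  # skip blank lines
        stripped = raw.lstrip(" ")
        depth = (len(raw) - len(stripped)) // len(indent)
        # Drop everything at this depth or deeper, then add the current label.
        del stack[depth:]
        stack.append(stripped.strip())
        paths.append(tuple(stack))
    return paths


# Hypothetical sitemap for the shoe site example.
SITEMAP = """\
Home
  Women's Shoes
    Sandals
    Boots
  Men's Shoes
    Sandals
"""

paths = parse_outline(SITEMAP)
for path in paths:
    print(" > ".join(path))
```

Printing each item with its full path makes it easier to spot duplicate labels, such as "Sandals" appearing under two different parents, before testers ever see the tree.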

2. Set up a few tasks

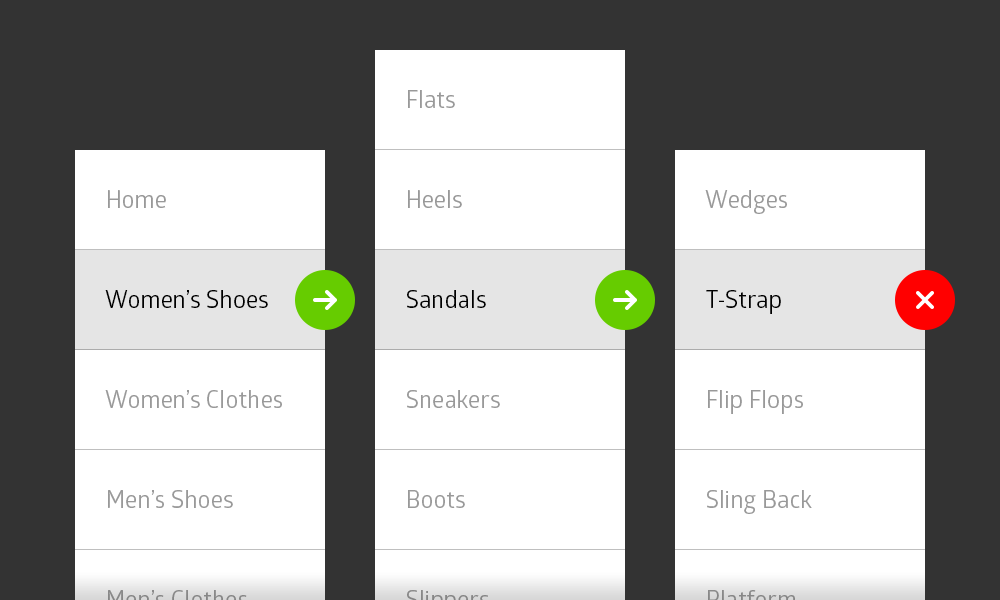

We recommend creating tasks that directly align with personas (characterizations of users) and user stories (statements explaining everyday tasks of users). Create tasks that are actionable and clear, such as “Find a pair of women’s flip flops for under $20.” For more tips on creating good tasks, check out Measuring Usability’s post on writing usability task scenarios and the Nielsen Norman Group’s post on turning user goals into task scenarios.

In the tool, you’ll need to add both the language of the task and the correct answer(s). That’s right, there may be more than one right answer. Think about all the different ways a user might conduct the task. After setting up the tasks, take the test yourself. Consider having a teammate also take the test. As you go through the test, make sure you can complete every task and that the language is clear.

One last thing: proofread! Make sure your tasks (and sitemap) are free of grammar and spelling mistakes. Many of the systems allow you to include instructions, additional open-ended questions, a welcome screen and a thank you screen. Proofread each and every one of them. I can’t stress this enough. Even small editorial mistakes can hurt your credibility.

3. Recruit participants to carry out the tasks

Ideally, your participants should be real users of your site. If you have a current site, you can recruit users via a popup survey. If you don’t have a site yet, consider inviting people you know who are interested in your site’s message and willing to participate in the survey. Let the users know that you’re considering or going through a redesign process and value their input.

Working Through the Results

Your testers will run through each task in the test (some systems allow users to skip tasks). The online tool records their final answer and the path they took to get there. Some systems record additional information, such as the amount of time it took them to complete the task at hand.

The systems output the results of the final selection by stating the number and/or percentage of users that found the correct answer. Some systems will give you direct and indirect percentages. A successful test should yield 80-90% accuracy. However, just looking for a percentage can get tricky, especially with certain systems.
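If your tool lets you export raw results, you can recompute these percentages yourself. Here is a minimal sketch, assuming that "direct" means the participant reached a correct answer without backtracking and "indirect" means they got there after backtracking. The `Result` record and its fields are hypothetical, not the export format of any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Result:
    answer: str        # menu item the participant finally selected
    backtracked: bool  # True if they moved back up the tree at any point


def summarize(results, correct_answers):
    """Return direct, indirect and overall success as percentages."""
    total = len(results)
    direct = sum(1 for r in results
                 if r.answer in correct_answers and not r.backtracked)
    indirect = sum(1 for r in results
                   if r.answer in correct_answers and r.backtracked)
    return {
        "direct_pct": 100 * direct / total,
        "indirect_pct": 100 * indirect / total,
        "success_pct": 100 * (direct + indirect) / total,
    }


# Made-up results for one task with a single correct answer.
results = [
    Result("Sandal Detail Page", False),
    Result("Sandal Detail Page", False),
    Result("Sandal Detail Page", False),
    Result("Sandal Detail Page", True),
    Result("Boots", False),
]
summary = summarize(results, {"Sandal Detail Page"})
print(summary)
```

With this made-up data, three of five participants succeed directly and one indirectly, so the overall success rate lands at 80%, the low end of the target range.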

For example, adding tasks in Treejack is a little complicated at times. Using the shoe site example from above, we cannot write a task of “Browse a list of all women’s shoes on the site” because Treejack doesn’t allow you to select “Women’s Shoes” as a correct answer. It will only allow you to select the lowest level items as correct answers. Naview has tried to solve this issue by allowing you to select ANY menu item as the correct answer.

This makes testing top level navigation in Treejack hard to do. Here’s our workaround. When using Treejack, either write a more specific task or remove the lower level menu items from the tree. If writing a more specific task, add a detail page (e.g. Sandal Detail Page) to the structure and write the task like this “Find a pair of women’s sandals for under $20.” Since we’re testing the top level navigation item, look at the path rather than the percentage. See if the path states that they chose Women’s Shoes first.
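Checking the first click by hand gets tedious with more than a handful of participants. The sketch below assumes each path is exported as an ordered list of the menu items a participant clicked. That format and the `first_click_rate` helper are our own illustration, not something Treejack provides.

```python
def first_click_rate(paths, expected_first):
    """Share of participants whose first click was `expected_first`."""
    non_empty = [p for p in paths if p]  # ignore participants who skipped
    if not non_empty:
        return 0.0
    hits = sum(1 for p in non_empty if p[0] == expected_first)
    return hits / len(non_empty)


# Made-up click paths for the sandals task.
paths = [
    ["Women's Shoes", "Sandals", "Sandal Detail Page"],
    ["Women's Shoes", "Sandals", "Sandal Detail Page"],
    ["Women's Clothes", "Women's Shoes", "Sandals", "Sandal Detail Page"],
    ["Women's Shoes", "Sandals", "Sandal Detail Page"],
]
rate = first_click_rate(paths, "Women's Shoes")
print(f"{rate:.0%} chose Women's Shoes first")
```

Here the third participant still finds the right page, so the success percentage would not reveal that they started in Women's Clothes. The first-click rate does.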

Analyzing the paths each user took can also give you additional insight on whether a sub-menu item is housed in the right section of your site. One thing we’ve noticed is that users will sometimes navigate to the correct spot the first time, but then look elsewhere before selecting their first attempt as the correct answer. This can skew your percentages, especially when comparing direct and indirect results.
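To see how often this happens, you can flag participants who reached the correct item, left it, and then came back to select it. This is a rough sketch under the assumption that a path lists every item visited in order, including revisits, and ends with the selected answer. The `found_then_wandered` name is ours.

```python
def found_then_wandered(path, correct):
    """True if `correct` was visited, left, and later chosen as the answer."""
    if not path or path[-1] != correct:
        return False  # the participant did not end on the correct item
    first_visit = path.index(correct)
    # An earlier visit than the final position means they looked elsewhere.
    return first_visit < len(path) - 1


# Made-up paths: one direct, one that found Sandals and then kept exploring.
direct_path = ["Women's Shoes", "Sandals"]
wandering_path = ["Women's Shoes", "Sandals", "Women's Shoes",
                  "Boots", "Women's Shoes", "Sandals"]

print(found_then_wandered(direct_path, "Sandals"))
print(found_then_wandered(wandering_path, "Sandals"))
```

Counting these participants separately helps explain a gap between direct and indirect results that has more to do with second-guessing than with a misplaced menu item.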

I've analyzed my results, now what?

After analyzing all of the results, see if there’s anything to change in the site navigation. It could be a label change or a change in structure. Perhaps Women’s Shoes should be changed to Ladies’ Shoes. Maybe your tests show that combining Women’s Shoes and Women’s Clothes into a top-level menu item called “Women’s” is better for your users. If you make changes to the structure, consider running a second usability test. You can even use some or all of the same tasks. In some cases, you’ll think of additional tasks when reviewing the results. Your results might also show that there’s really nothing to change. Awesome! You can now move on to the next phase of the project.

When to Test

Not every site you build will require navigation testing. In their DrupalCon Austin presentation, Lynn Winter and Drew Gorton go into detail about nine different times you may want to conduct usability testing. For navigation testing specifically, it’s definitely good to test when complex or high-risk navigation is required, when you need user buy-in to help inform decisions, when you need to back up expertise, and when a task-driven website is needed.

To get the most out of your usability testing, you’ll want to test your site structure early on in the process. This will help to ensure that your navigation aligns with the goals of your site and is intuitive to your users. It doesn’t necessarily have to slow down the project a whole lot. You can still work on content maps and wireframes for individual pages. Just keep in mind that you may need to make changes to labels, combine two pages, add a new page or move a page to a different section. You’ll also need to make time to analyze the results.

There are a number of other usability tests you can run to fully flesh out your new site. For example, we use Chalkmark to continue testing on high-fidelity designs, such as the homepage, article listings and individual pages. Additional testing can be conducted after a prototype is built.

Providing clear and compelling navigation is key to a site’s success. Conducting usability testing early in the project can help make sure your site structure addresses your users’ needs and your organization’s business goals. I encourage you to try out the different navigation tools and see what works best for your next project.