Web applications are often thought of in terms of something you meticulously plan, fund, build, publish, and then — with a greatly reduced ongoing investment — maintain and update until the software struggles to serve its purpose and the process begins all over again. There is a place for that approach. And then there’s the product mindset: build something that works, embrace a perennial rhythm of planning, development, and retrospective, then watch your software grow into something beyond what you could have imagined.

Sustainable development: Persistent cadence & reliable progress

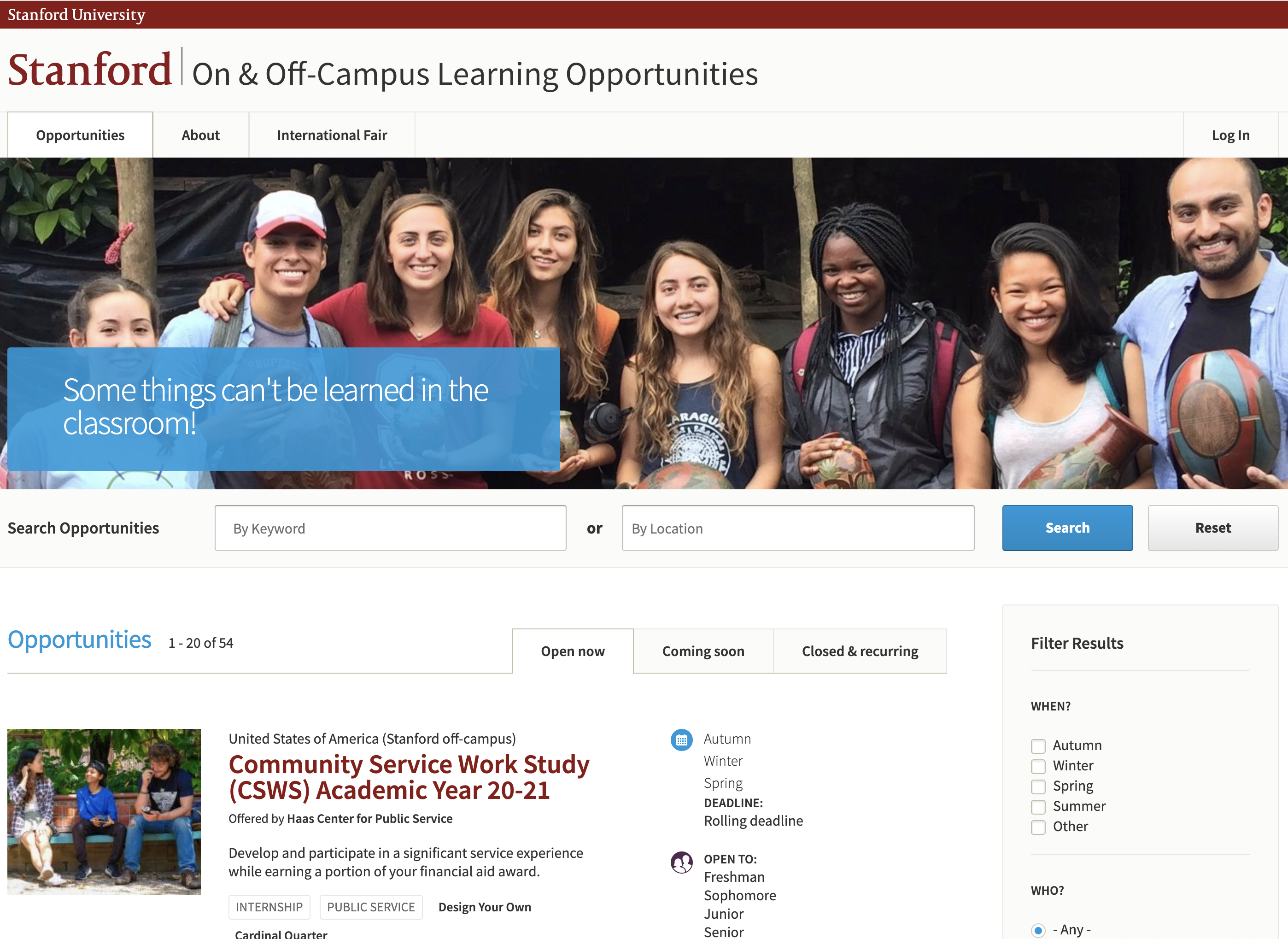

A few days after Christmas in 2015, Aten started work on a prototype of an application workflow management system for Stanford University. Stanford faculty and staff needed to manage student applications to participate in off-campus learning opportunities: research, public service, language studies, internships, and the like. Our goal was to build something immediately useful, then iterate around stakeholder feedback to deliver the features most needed by users on an ongoing basis.

Just six weeks later, Stanford On & Off-Campus Learning Opportunities (SOLO) was collecting applications for its very first opportunity listings. Development continued at a consistent pace, with new feature releases each month. Less than two years later, a need was identified for a second platform aimed at processing faculty applications for internal funding opportunities. Stanford Seed Funding (SSF), managed by the same team in the same development stream, was launched in the fall of 2017 to meet that need.

In its first year, SOLO collected student applications for more than 200 distinct opportunities. Today, five years later, more than 12,000 Stanford users have registered to apply for (or manage) more than 1,600 opportunities across SOLO and SSF. We continue to release new features each month.

SOLO and SSF have been the beneficiaries of full-scale development since their inception. We’ve run roughly one development sprint each month to deliver new features and new functionality, and to engage with wider audiences and better serve various units of Stanford University. The platforms have grown substantially in both adoption and available features. What began as essentially a custom application form builder has expanded to include:

- Full-featured application workflow management

- A robust email notifications system

- Third-party application review management

- Workload, task & content sharing via user teams

- Automatic student record and faculty profile integrations via secure APIs

- Program- and university-level reporting

- A host of specialized dashboards to manage content

- Specialized travel risk assessment workflows, integrated with live State Department Travel Advisory data

- Application data downloads as CSV or automagically generated PDF

While our team roster gets shaken up depending on the demands of each particular sprint (interface design, QA, accessibility, information architecture), the product owner on the Stanford side and lead developer on the Aten side have been the same since day one. That’s not where the commitment to continuity ends, either.

For more than five years our process has embraced three major elements loosely organized along about-a-month cycles — a rhythm I believe is largely responsible for the ongoing success of the project.

Stakeholder engagement and planning

Stakeholder engagement and planning is a huge part of the two-person Stanford team’s full-time job, and direct communication between developers and stakeholders has played a significant role as well. We’ve conducted feature planning activities with the staff teams who publish opportunities on the site, held meetings with IT Services personnel to facilitate better implementation of Stanford’s various APIs, and made a habit of hopping on ad hoc Zoom calls with faculty, staff, and students to troubleshoot, explore feature requests, or better understand pinch points in our various workflows.

Whether it’s Stanford students, faculty, or staff, our combined Stanford / Aten team has been proactive and ambitious in engaging the people who use (or benefit from) the SOLO and SSF platforms, a cornerstone principle of user-centered systems design. The results of those interactions, conversations, workshops, and meetings feed into a product backlog that’s home to hundreds of feature requests, ideas, suggestions, and research topics in varying stages of evolution, all maturing towards “development ready” items that will be earmarked for future sprints.

Dedicated sprint planning sessions organize “development ready” backlog items into upcoming sprints, accompanied by clear acceptance criteria and often supported by screenshots, sketches, mock-ups or additional notes & documentation.

Development

During our roughly two-week development cycles, team communication that’s not centered around the current sprint’s development goals spins down considerably. Developers (myself & whomever else we’ve recruited for the sprint) are given plenty of uninterrupted time to focus on our tasks, punctuated with limited scheduled meetings to present works-in-progress, collect feedback, or begin exploratory conversations on tasks destined for future sprints.

In addition to regularly building new features, sprints also make considerable space for best-practice “housekeeping” tasks. Code refactoring (revisiting & improving old code) helps us remove some of the inefficiencies and obfuscations that constant new development inevitably introduces into older code. This is especially important in rapid-cycle development, where large features go from whiteboard to production in three to six weeks.

Automated testing (both revising existing tests and building new ones for new features) is also an important part of a healthy lean development practice. Our automated tests both supplement human QA and reduce the likelihood of butterfly-effect bugs, where old features break when new code is introduced.
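As a rough illustration of the kind of regression test described above, here is a minimal sketch in Python. It is not taken from the SOLO or SSF codebase: the `applications_to_csv` helper, its field names, and the sample record are all hypothetical, chosen only to echo the CSV download feature mentioned earlier. The idea is that a test pins down the current behavior of an existing feature so that later changes which break it are caught automatically.

```python
import csv
import io

# Hypothetical column order for an application export (an assumption for this sketch).
EXPORT_FIELDS = ["applicant", "opportunity", "status"]


def applications_to_csv(applications):
    """Hypothetical export helper: serialize application records to CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=EXPORT_FIELDS, lineterminator="\n")
    writer.writeheader()
    for record in applications:
        # Write only the known fields; missing values become empty cells.
        writer.writerow({field: record.get(field, "") for field in EXPORT_FIELDS})
    return buffer.getvalue()


def test_export_keeps_header_and_column_order():
    """Regression test: the export format must not change when new code lands."""
    records = [
        {
            "applicant": "A. Student",
            "opportunity": "Summer Research",
            "status": "submitted",
            "internal_note": "a newer field that the export should ignore",
        }
    ]
    expected = (
        "applicant,opportunity,status\n"
        "A. Student,Summer Research,submitted\n"
    )
    assert applications_to_csv(records) == expected


def test_export_tolerates_missing_fields():
    """Older records that lack a field should still export, with an empty cell."""
    records = [{"applicant": "B. Student", "opportunity": "Language Study"}]
    assert applications_to_csv(records).splitlines()[1] == "B. Student,Language Study,"


if __name__ == "__main__":
    test_export_keeps_header_and_column_order()
    test_export_tolerates_missing_fields()
```

Tests like these are cheap to run on every change, which is what lets them stand alongside human QA rather than replace it.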

Retrospective

Perhaps the most important element of maintaining quality and velocity in long-term development projects is self-examination. As a team, it’s incredibly important to set aside time to reflect on our processes, examine our opportunities for improvement, and celebrate our wins.

Success with SOLO & SSF, as with any web application built for a diverse and evolving user base, is a moving target. After each development cycle the combined Stanford / Aten team sets aside time to reflect on the sprint, recognize our successes, and explore our opportunities for improvement. The outcomes can be concrete process adjustments (don’t deploy on Fridays, for example), loose guidelines (seek to involve team members from various disciplines, when possible), or simply reiterating and underlining what worked well and should be repeated. The perfect process doesn’t exist, and retrospectives aren’t an attempt to find it. Instead, the goal is active adaptation to the ever-changing demands of a living, evolving project.

This spring we’ll finish our sixtieth SOLO & SSF development sprint, after more than five years of ongoing stakeholder engagement, planning, development, and reflection. It’s such a delight working with Stanford’s Research IT & Innovation team, helping to support their mission of facilitating research, and actively engaging with the extraordinary humans at the center of the platforms. In an ideal world all developers would have the pleasure of working with similarly motivated, dedicated, and flexible teams with tools and processes to match.